Strava power estimation: Cortland Hurl

The Cortland Hurl is the only significant climb on the SF2G Bayway route. According to Strava, it gains 25 meters in 400 meters, an average grade of 6.3%, although the grade is non-uniform. It starts out fairly gradual, then steepens, then gets gradual again towards the top. I like to make a good effort here when I'm feeling good during morning commutes. Typically I'm behind at the top of the steep bit, but I tend to do fairly well on the final gradual portion. If I'm having a good day, depending on who's there and how they're riding, I have a chance to be first to the top.

I've not ridden with a power meter for a year now. I sort of lost interest: I just like riding my bike and I don't care what the power meter data are, so why carry around a heavy, expensive PowerTap wheel? Strava gives me a fairly good idea how I'm doing with its segment timings.

However, in addition to speed numbers, Strava also produces power estimates. In fact, it uses these estimates when reporting a rider's best effort over different time intervals. I have suggested to them that this is a mistake: only power meter numbers should be used for this purpose, since Strava's estimates are unreliable.

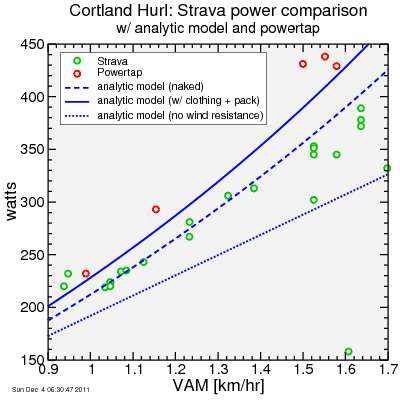

Since I had a lot of data for Cortland (28 rides + one I reject because I dropped my keys along the way and had to turn back to fetch them), I figured it would be interesting to compare Strava power to PowerTap power, plotted versus VAM which is considered a decent surrogate for power/mass ratio on climbs. I also compare these with "hand calculations" using the usual power-speed model. Here's the result:

The analytic calculations assume constant speed, constant grade, and no net acceleration (start and finish speeds are the same). For a given set of assumptions they are thus a lower-bound estimate. I show three sets of assumptions. The first uses a "realistic" estimate for total mass and for CdA, the coefficient for wind resistance power. For this one I set my body mass to 57 kg and my bike mass to 8 kg, then added 4 kg for equipment, clothing, and what I was carrying on my back. I assumed a coefficient of rolling resistance of 0.5%. CdA was set to 0.5 meters squared. For the second estimate, I eliminated the clothing + equipment mass and reduced CdA to 0.4 meters squared, assuming no backpack. In the final estimate I eliminated all wind resistance.
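A minimal Python sketch of this kind of hand calculation follows. It is not necessarily the exact formula behind the plot. The air density (1.2 kg/m³), still air, the uniform 25 m / 400 m grade treated as rise over run, the 65 kg mass in the no-wind-resistance case, and the example VAM of 1200 m/h are all assumptions made for illustration; the function and variable names are hypothetical.

```python
import math

G = 9.81    # gravitational acceleration, m/s^2
RHO = 1.2   # air density in kg/m^3 (assumed; the post does not state one)
GRADE = 25.0 / 400.0  # Strava segment gain over length, treated as a uniform rise over run

def climb_power(vam, mass, cda, crr=0.005, grade=GRADE):
    """Steady-state power in watts for a given VAM (meters climbed per hour)."""
    climb_rate = vam / 3600.0                    # vertical speed, m/s
    sin_t = grade / math.sqrt(1.0 + grade ** 2)
    cos_t = 1.0 / math.sqrt(1.0 + grade ** 2)
    speed = climb_rate / sin_t                   # road speed, m/s
    gravity = mass * G * climb_rate              # power to gain altitude
    rolling = crr * mass * G * cos_t * speed     # rolling resistance power
    aero = 0.5 * RHO * cda * speed ** 3          # wind resistance power, still air assumed
    return gravity + rolling + aero

# The three assumption sets described above.
CASES = {
    "realistic": dict(mass=57 + 8 + 4, cda=0.5),
    "naked": dict(mass=57 + 8, cda=0.4),
    "no wind resistance": dict(mass=57 + 8, cda=0.0),  # mass here is an assumption
}

for name, params in CASES.items():
    # 1200 m/h is an arbitrary example VAM, not a value from the ride data
    print(name, round(climb_power(1200, **params)), "W")
```

Sweeping `vam` over the range of observed values would trace out the three analytic curves for comparison against measured and estimated power.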

As can be seen in the plot, the PowerTap measurements are almost always higher than the Strava estimates. Strava estimates start out fairly well aligned with the zero-equipment-mass ("naked") estimate of power, except for my fastest runs, where the Strava estimates become much lower, dropping as low as the zero-wind-resistance estimates. There are a few Strava estimates that are clearly anomalously low.

The PowerTap data fall above even my highest analytic power estimate. This is as it should be: as I noted, my analytic estimate assumes uniform speed and grade, and thus underestimates wind resistance near the beginning and end of the segment, where the grade is gentler and the speed higher. Wind resistance power is superlinear in speed, so the underestimates during faster-than-average portions are of greater magnitude than the overestimates during slower-than-average portions, and the net effect is an underestimate.
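The effect of non-uniform speed on the wind resistance term can be checked with a small numeric sketch. The speeds below are illustrative, not measured data, and the CdA and air density defaults are assumptions; only the cubic dependence of aerodynamic power on speed matters for the comparison.

```python
def aero_power(speed, cda=0.5, rho=1.2):
    """Aerodynamic power in watts at a given road speed (m/s), still air."""
    return 0.5 * rho * cda * speed ** 3

# Two equal-duration halves: one faster and one slower than the 5 m/s average.
fast, slow, average = 6.0, 4.0, 5.0   # illustrative speeds, not measured data

uniform = aero_power(average)                        # what a uniform-speed model predicts
actual = (aero_power(fast) + aero_power(slow)) / 2   # time-averaged aero power

shortfall_fast = aero_power(fast) - uniform   # amount missed on the fast half
excess_slow = uniform - aero_power(slow)      # amount overcounted on the slow half

print(f"uniform-speed estimate: {uniform:.1f} W")
print(f"time-averaged actual:   {actual:.1f} W")
print(f"missed on fast half: {shortfall_fast:.1f} W, overcounted on slow half: {excess_slow:.1f} W")
```

Because the amount missed on the fast half exceeds the amount overcounted on the slow half, the uniform-speed estimate of wind resistance power comes out low.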

So what do I learn from this? Basically, you shouldn't trust Strava power estimates. But if you do care about them, you should make sure clothing + equipment mass is included in bike mass. I had not done this. It all adds up: toolbag, pump, water bottles, clothing, shoes, helmet. You'd probably be surprised at the result if you bundled it all up and put it on a scale.
